Complete History of Computers From Early Machines to Modern PCs

Published: 9 Mar 2026

How did computers move from simple calculating machines to the powerful devices we use every day? This question leads many learners to search for the complete history of computers. I had the same question when I started learning computing basics and felt confused by so many names, dates, and inventions.

At first, the timeline seemed overwhelming, from mechanical calculators to ENIAC and then to personal computers. Later, I studied each stage carefully, learning how each innovation solved real problems and improved speed, accuracy, and storage.

Understanding this history helped me connect old inventions to modern systems and appreciate why today’s computers work so fast and efficiently. Once I followed the full timeline, the story of computers became clear, and I could explain it to others easily.

What the History of Computers Truly Means

The term computer did not always mean a machine. Long ago, a computer was a person who did calculations by hand. Over time, tools and machines took over this work. Today, a computer means an electronic device that processes data fast and accurately.

In the early days, people used manual methods and simple tools like counting sticks and the abacus. Later came mechanical devices that could add or multiply. These ideas slowly led to modern digital computers.

The history of computers is not just about dates. It is the story of how thinking and technology changed our world. It focuses on ideas, people, and moments that shaped computing.

- Big ideas that solved calculation problems

- New inventions that made work faster

- Scientists and engineers who built early machines

- Breakthroughs that changed daily life

Understanding this history matters even today. It helps us appreciate the technology we use every day. It also shows how innovation grows step by step and inspires future developments.

- Helps value modern computers and smartphones

- Explains why systems work the way they do

- Builds curiosity about future technology

- Connects past ideas to today’s digital world

Pre-Computer Era: Ancient Tools & Concepts

Long before modern computers existed, humans still needed ways to count, measure, and solve problems. For trade, astronomy, and record-keeping, they created simple tools and smart ideas. These early methods were the first steps toward today’s powerful computers. They show how human needs pushed the growth of computing concepts.

Abacus and Early Counting Tools

The abacus is one of the oldest calculating tools. It uses beads on rods to help people add, subtract, multiply, and divide. Ancient civilizations used it in markets and schools. Other tools were even simpler but very useful.

- Abacus used beads to do arithmetic

- Tally marks helped keep simple counts

- Knotted ropes recorded numbers and events

- Counting boards organized numbers for trade

These tools were important because they trained people to think in steps. They made calculations faster and more reliable. This was the beginning of structured problem-solving.
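
The step-by-step habit described above can be sketched in code. This is a minimal illustration, assuming a simplified abacus where each rod holds one decimal digit; the function names `abacus_add` and `rods_to_int` are made up for this example and do not model any particular historical abacus.

```python
def abacus_add(a: int, b: int, rods: int = 8) -> list[int]:
    """Add two non-negative integers rod by rod, carrying to the next rod."""
    # Each rod holds one decimal digit (0-9); index 0 is the ones rod.
    result = [0] * rods
    carry = 0
    for i in range(rods):
        digit_a = (a // 10**i) % 10
        digit_b = (b // 10**i) % 10
        total = digit_a + digit_b + carry
        result[i] = total % 10  # beads left showing on this rod
        carry = total // 10     # one bead carried over to the next rod
    if carry:
        raise OverflowError("not enough rods to hold the result")
    return result


def rods_to_int(rod_values: list[int]) -> int:
    """Read the rods back as an ordinary integer."""
    return sum(digit * 10**i for i, digit in enumerate(rod_values))


print(abacus_add(478, 256, rods=4))          # [4, 3, 7, 0], ones rod first
print(rods_to_int(abacus_add(478, 256)))     # 734
```

Working one rod at a time and carrying to the next is the same place-value routine that later mechanical calculators automated.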

Early Analog Devices & Binary Ideas

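Early analog devices measured continuous values like time or movement. For example, sundials showed time using shadows, and mechanical tools measured motion. Separately, mathematicians explored two-state (yes/no) ideas: Gottfried Leibniz described binary arithmetic in the early 1700s, and George Boole's logic of true and false followed in the 1800s.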

- Sundials measured time using the sun

- Mechanical tools showed movement and change

- Binary ideas used two states, like yes/no

- These ideas later shaped digital computers
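
To make the two-state idea concrete, here is a small sketch of how an ordinary number can be written using only the symbols 0 and 1. The helper names `to_binary` and `from_binary` are illustrative choices for this example (Python also has a built-in `bin`).

```python
def to_binary(n: int) -> str:
    """Write a non-negative integer using only the two symbols 0 and 1."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return "0"
    bits = []
    while n > 0:
        bits.append(str(n % 2))  # remainder is the next bit, lowest first
        n //= 2
    return "".join(reversed(bits))


def from_binary(bits: str) -> int:
    """Read a string of 0s and 1s back as an integer."""
    value = 0
    for bit in bits:
        value = value * 2 + (1 if bit == "1" else 0)
    return value


print(to_binary(13))        # 1101
print(from_binary("1101"))  # 13
```

Each digit is a single yes/no choice, which is why two-state devices such as switches can store and process numbers.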

19th Century Pioneers: Babbage & Lovelace

The 19th century set the stage for modern computing. Visionaries like Charles Babbage and Ada Lovelace imagined machines that could perform calculations automatically and follow instructions. Their ideas laid the foundation for today’s computers.

Charles Babbage: Difference & Analytical Engines

Charles Babbage designed machines to automate calculations. His Difference Engine was meant to compute and print mathematical tables faster and more accurately than human calculators. Later, he conceived the Analytical Engine, a general-purpose machine that could follow instructions. Neither machine was completed in his lifetime, but the Analytical Engine's design used punched cards to supply instructions, a precursor to modern programming.

- Difference Engine automated repetitive calculations

- Analytical Engine designed for any kind of computation

- Used punch cards to input instructions

- Inspired the idea of programmable machines

Think of Babbage as a master inventor imagining a “mechanical brain” that could do many tasks, not just one.
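
The Difference Engine was built around the method of finite differences, which tabulates a polynomial using nothing but repeated addition. The sketch below is a rough software analogue, not a model of the actual mechanism; the function name `difference_table` and the choice of the polynomial x² + x + 41 as the example are assumptions made for illustration.

```python
def difference_table(initial: list[int], steps: int) -> list[int]:
    """Tabulate a polynomial using only addition.

    `initial` holds [p(0), first difference, second difference, ...],
    where the last entry is the constant difference.
    """
    registers = list(initial)
    values = []
    for _ in range(steps):
        values.append(registers[0])
        # Add each higher-order difference into the register below it.
        for i in range(len(registers) - 1):
            registers[i] += registers[i + 1]
    return values


# For p(x) = x*x + x + 41: p(0) = 41, first difference = 2, second difference = 2.
print(difference_table([41, 2, 2], 5))  # [41, 43, 47, 53, 61]
```

No multiplication appears anywhere in the loop, which is what made the approach practical for a machine of gears and wheels.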

Ada Lovelace: First Computer Programmer

Ada Lovelace worked closely with Babbage on the Analytical Engine. In her notes published in 1843, she wrote what is now widely recognized as the first computer program, a procedure for calculating Bernoulli numbers, showing how instructions could guide the machine. She also realized that computers could do more than math: with the right instructions, they could manipulate symbols and perhaps even compose music.

- Wrote the first program for Babbage’s machines

- Explained how machines could follow symbolic instructions

- Saw potential beyond numbers and calculations

- Pioneered the idea of programming as we know it today

Early Electronic & Theoretical Breakthroughs

The 20th century brought major advances in both ideas and machines. Thinkers imagined what computers could do, while engineers built the first electronic systems. Together, these breakthroughs laid the groundwork for modern computing.

Alan Turing’s Universal Machine

In 1936, Alan Turing proposed a theoretical machine that could carry out any computation that can be written as a step-by-step procedure. This idea, called the Universal Turing Machine, was not a physical device but a concept showing how computation could work. It became a theoretical foundation for modern computers.

- Conceptual machine for solving problems

- Could follow step-by-step instructions

- Showed how any step-by-step calculation could be automated

- Laid groundwork for computer science theory

Think of Turing’s machine as a “blueprint for thinking machines” — a guide for what computers could do.
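
A tiny simulator can show what "following step-by-step instructions" means for such a machine. This is a minimal sketch under simplifying assumptions: one tape, a rule table keyed by (state, symbol), and a hypothetical example machine, `INCREMENT`, that adds 1 to a binary number. The names and the rule format are choices made for this example, not Turing's original notation.

```python
from collections import defaultdict

BLANK = "_"
MOVES = {"L": -1, "R": 1, "N": 0}


def run_turing_machine(rules, tape, state, head, halt_state="halt", max_steps=10_000):
    """Run a one-tape machine and return the final tape contents as a string."""
    # Unwritten cells read as BLANK, so the tape is effectively unbounded.
    cells = defaultdict(lambda: BLANK, enumerate(tape))
    steps = 0
    while state != halt_state:
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = cells[head]
        # Each rule says: what to write, where to move, which state comes next.
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += MOVES[move]
        steps += 1
    if not cells:
        return ""
    lo, hi = min(cells), max(cells)
    return "".join(cells[i] for i in range(lo, hi + 1)).strip(BLANK)


# Example machine: add 1 to a binary number, starting at the rightmost bit.
INCREMENT = {
    ("carry", "1"): ("0", "L", "carry"),   # 1 + 1 = 0, carry moves left
    ("carry", "0"): ("1", "N", "halt"),    # 0 + 1 = 1, done
    ("carry", BLANK): ("1", "N", "halt"),  # ran off the left edge: new digit
}

print(run_turing_machine(INCREMENT, "1011", "carry", head=3))  # 1100
print(run_turing_machine(INCREMENT, "111", "carry", head=2))   # 1000
```

Swapping in a different rule table changes what the machine computes without changing the simulator, which is the core of the universal-machine idea.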

Early Electronic Computers (ENIAC, ABC, etc.)

The first practical electronic computers appeared in the 1940s. Machines like ENIAC and the Atanasoff-Berry Computer (ABC) used vacuum tubes instead of mechanical parts. Because their switching was electronic rather than mechanical, they ran far faster than electromechanical devices.

- ENIAC and ABC were among the first electronic computers

- Replaced moving parts with electrical circuits

- Could perform calculations much faster than humans

- Early machines showed what theory could achieve in practice

These computers were huge, filling entire rooms, but they proved that electronic computation was possible.

World War II Computing Contributions

Wartime needs accelerated both theory and hardware. Machines were built to break codes and calculate artillery tables. This pressure led to faster development and practical applications of electronic computers.

- Code-breaking machines like Colossus sped up decryption tasks

- Military calculations required faster computing

- War needs drove innovation in both theory and electronics

- Lessons from wartime machines influenced post-war computers

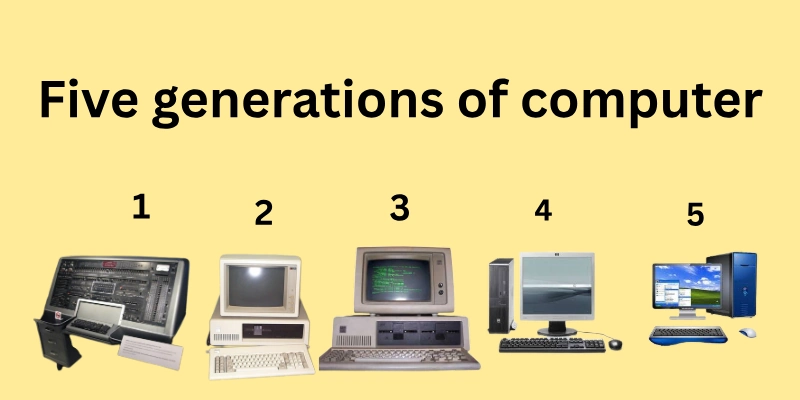

Computer Generations (With Clear Sections)

Computers did not appear overnight. They evolved through generations, each marked by new technology that changed how machines worked. Understanding these generations helps beginners see how we moved from giant mechanical calculators to modern AI-powered devices.

Mechanical Era

The earliest computers were mechanical machines, like Charles Babbage’s Difference and Analytical Engines. They used gears, levers, and cogs to perform calculations. These machines were slow but revolutionary for their time.

- Mostly mechanical parts like gears and wheels

- Performed arithmetic and mathematical tables

- Inspired the concept of programmable machines

- Early foundation for later electronic computers

First Electronic Computers

The first electronic computers used vacuum tubes instead of moving parts. Machines like ENIAC were much faster than mechanical devices but very large and consumed a lot of power. They were used for military calculations and scientific research.

- Vacuum tubes replaced mechanical components

- Faster calculations and larger problem-solving

- Examples: ENIAC, ABC

- Important for wartime and scientific advancements

Transistor Era

Transistors replaced vacuum tubes in the 1950s–1960s. They were smaller, more reliable, and generated less heat. This allowed computers to become more compact and accessible.

- Transistors acted as tiny electronic switches

- Smaller and more energy-efficient

- Examples: IBM 1401, early business computers

- Made computers more practical for businesses
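
To see how simple on/off switches can add numbers, the sketch below models a switch as a Python boolean and builds a one-bit adder out of a single gate type. It is a simplified logical model, not a description of real transistor circuits; the function names are chosen for this example.

```python
def nand(a: bool, b: bool) -> bool:
    """Output is off only when both input switches are on."""
    return not (a and b)


def xor(a: bool, b: bool) -> bool:
    """Exclusive OR built from four NAND gates."""
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def and_gate(a: bool, b: bool) -> bool:
    """AND built from two NAND gates."""
    n = nand(a, b)
    return nand(n, n)


def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two bits; return (sum bit, carry bit)."""
    return xor(a, b), and_gate(a, b)


for a in (False, True):
    for b in (False, True):
        total, carry = half_adder(a, b)
        print(int(a), "+", int(b), "=", f"{int(carry)}{int(total)}")
```

Chaining adders like this one, bit by bit, is how circuits made of many small switches handle larger numbers.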

Integrated Circuits (ICs)

Integrated Circuits combined many transistors on a single chip. This innovation made computers smaller, faster, and cheaper. Miniaturization led to wider adoption in industry and education.

- Multiple transistors on a single chip

- Increased speed and efficiency

- Examples: Early minicomputers like PDP-8

- Enabled smaller and more reliable machines

Microprocessor & Personal Computers

The microprocessor put the CPU on a single chip, making personal computers possible. PCs brought computing to homes, schools, and offices. Software and user-friendly interfaces became more common.

- CPU on a single chip

- Rise of PCs for everyday use

- Examples: IBM PC, Apple II

- Made computing accessible to everyone

AI, Quantum & Beyond

Today, researchers and engineers are pushing computing toward artificial intelligence, quantum computing, and other emerging ideas. Machines can learn from data, solve complex problems, and process massive datasets at high speed. This era focuses on innovation and new possibilities.

- AI for learning and decision-making

- Quantum computers for potentially large speedups on specific problems

- Examples: Google’s quantum experiments, AI assistants

- Shaping the future of technology and society

Personal Computers Revolution (1970s–90s)

The 1970s to 1990s marked a major shift in computing. Computers moved from labs and big offices into homes, schools, and small businesses. This era made technology more accessible and changed the way people worked, learned, and communicated.

Milestones in Hardware

The Intel 4004, released in 1971, was the first commercially available microprocessor, putting the CPU on a single chip. The Apple II became a popular home computer, offering an easy way for families and schools to use technology. The IBM PC standardized business computing, making software and hardware more compatible.

- Intel 4004: first commercial CPU on a chip

- Apple II: brought computers to homes and schools

- IBM PC: standard for office and business use

- Made computers smaller, faster, and more reliable

Rise of GUI & Software Ecosystems

Graphical user interfaces (GUIs) like Mac OS and Windows made computers easier for beginners. Instead of typing complex commands, users could click icons and menus. Software libraries also grew, offering applications for education, business, and entertainment.

- GUIs simplified computer interaction

- Software expanded what computers could do

- Examples: word processors, spreadsheets, educational programs

- Helped beginners use computers confidently

Key Figures

Visionaries like Steve Jobs, Steve Wozniak, and Bill Gates drove this revolution. Jobs and Wozniak created the first Apple computers, combining design and usability. Gates led Microsoft, providing software that made PCs widely usable. Their innovations made computers part of daily life.

- Steve Jobs & Steve Wozniak: Apple personal computers

- Bill Gates: Microsoft software for PCs

- Pioneered user-friendly design and widespread adoption

- Shaped the modern personal computing world

Internet Era & Modern Computing

The Internet and modern technologies transformed computing from isolated personal machines into a connected, intelligent ecosystem. Today, computers, phones, and other devices communicate, share data, and help people work, learn, and play more efficiently than ever.

ARPANET → World Wide Web → Mobile Computing

The journey started with ARPANET, a network launched in 1969 for sharing information between research computers. It grew into the Internet, and in the early 1990s the World Wide Web, built on top of the Internet, made websites and online resources accessible to everyone. Later, mobile computing allowed smartphones and tablets to access the internet anywhere, anytime.

- ARPANET: early network for research

- WWW: made global information easily accessible

- Mobile devices: internet on phones and tablets

- Revolutionized how people access and share data

Cloud Computing, Smartphones & Social Web

Cloud computing lets people store files online and access them from any device. Smartphones combined computing and communication in one device. Social networks and mobile apps changed how people connect, work, and entertain themselves daily.

- Cloud storage: easy access and backup of files

- Mobile apps: tools for work, learning, and fun

- Social media: connects people worldwide

- Changed daily life, work, and communication patterns

AI, Machine Learning & Quantum Computing Trends

Modern computing explores AI, machine learning, and quantum computing. AI assistants can answer questions and help with tasks. Predictive analytics uses data to make smarter decisions. Quantum computers, though still experimental, promise large speedups on certain kinds of complex problems.

- AI assistants like Siri or Alexa

- Machine learning for recommendations and predictions

- Quantum computing for advanced research

- Shows the future potential of intelligent computing

3000 BCE – Early Calculating Tools

- Event: Abacus and other counting tools

- Impact: Helped humans perform arithmetic; first step toward computing

1822 – Babbage’s Difference Engine

- Event: Charles Babbage designed a machine to automate calculations

- Impact: Showed how machines could perform complex math reliably

1843 – Ada Lovelace’s First Program

- Event: Ada Lovelace wrote instructions for Babbage’s Analytical Engine

- Impact: First concept of a computer program; showed machines could follow symbolic instructions

1945 – ENIAC

- Event: First electronic general-purpose computer

- Impact: Much faster than humans or mechanical machines; used for calculations and research

1970s–80s – First Personal Computers

- Event: Apple II, IBM PC, and similar machines became available

- Impact: Brought computers to homes and offices; started the PC revolution

1980s–90s – Internet & World Wide Web

- Event: ARPANET gave way to the Internet, and the World Wide Web was built on top of it

- Impact: Connected computers globally; enabled information sharing and online communication

2000s – Mobile Computing & Cloud Era

- Event: Smartphones, tablets, and cloud services emerged

- Impact: Allowed access to data and apps anywhere; transformed daily work and communication

2010s–Present – AI, Machine Learning & Quantum Computing

- Event: Advanced AI assistants, predictive analytics, and experimental quantum computers

- Impact: Made computers smarter; opened possibilities for future innovations and problem-solving

Conclusion

The history of computers shows an incredible journey from manual calculations with tools like the abacus to today’s AI-powered and quantum computing systems. Along the way, pioneers like Babbage and Lovelace laid the foundation, and breakthroughs like ENIAC, personal computers, and the Internet transformed how we live and work.

Personal computers brought technology into homes and offices, while the Internet and mobile devices connected the world. Modern computing continues to grow with AI, machine learning, cloud systems, and quantum research, shaping everything from communication to problem-solving.

FAQs: Complete History of Computers

Let’s go through some quick FAQs that people often ask about the complete history of computers. Each answer gives you a clear and easy explanation.

The “first computer” can mean different things. Charles Babbage designed the first mechanical computer, the Difference Engine, in the 1800s. Later, ENIAC (1945) became the first large-scale electronic computer. Each step built on earlier ideas, leading to the computers we use today.

The first design for a general-purpose programmable machine was Babbage’s Analytical Engine, conceived in the 1830s but never fully built. Ada Lovelace wrote the first instructions for it, considered the first computer program. This showed that machines could follow step-by-step instructions, not just calculate numbers.

Early computers were huge and used vacuum tubes, filling entire rooms. The invention of transistors and integrated circuits made computers smaller, faster, and more reliable. Microprocessors and personal computers eventually led to laptops and smartphones, putting powerful computing in the palm of your hand.

Important figures include Charles Babbage (mechanical computers), Ada Lovelace (first programmer), Alan Turing (theoretical computing), and John von Neumann (modern computer architecture). Later, Steve Jobs, Steve Wozniak, and Bill Gates helped bring PCs to homes and businesses. Each pioneer contributed ideas or inventions that shaped computing.

The Internet connected computers worldwide, allowing people to share information easily. It led to the World Wide Web, mobile access, cloud storage, and social networks. This transformed communication, learning, work, and entertainment globally.

Personal computers made computing accessible to homes, schools, and offices. Tasks like writing, accounting, and design became faster and easier. PCs also created opportunities for gaming, learning, and creativity, making technology part of everyday life.

AI helps computers learn from data, make predictions, and assist with tasks. Examples include virtual assistants like Siri or Alexa, recommendation systems on apps, and automated problem-solving in industries. This shows how computing has become smarter and more adaptive.

Learning history helps beginners see how technology developed and why computers work the way they do. It also inspires curiosity about future innovations and shows how ideas from the past shaped modern devices.

The future includes AI, quantum computing, and highly connected systems. These technologies aim to solve complex problems faster and smarter. Understanding the past helps us appreciate the future possibilities in technology and innovation.
